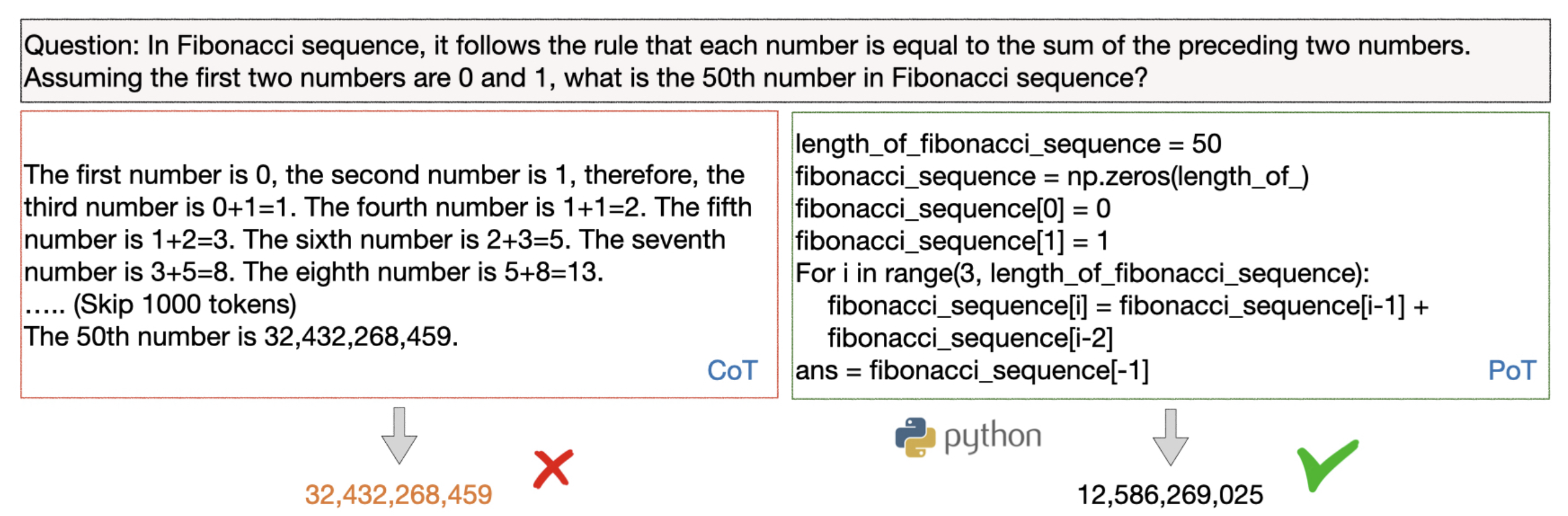

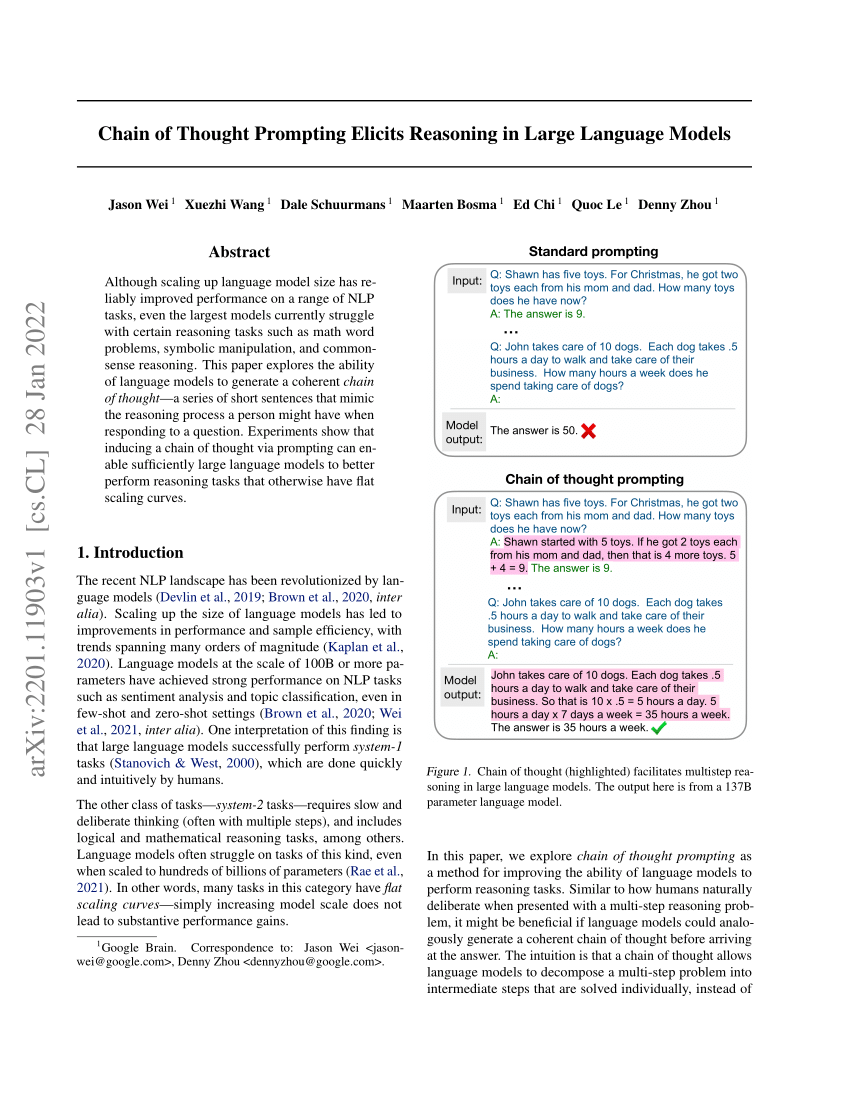

Denny Zhou on Twitter: "A key point in the chain-of-thought prompting paper: RIP downstream-task finetuning on LLMs."

Language: Train of thought vs. chain of thought. Which is older and which more popular? Do their usages differ in terms of formality etc.? - Quora